Dark PR Exposed: The Hidden World of Reputation Manipulation

Shadowy agencies now weaponize AI, sockpuppet networks, and hack-and-leak operations to demolish reputations — and the industry is growing faster than most people realize.

- Dark PR services are available for as little as $100, making reputation attacks accessible to virtually anyone.

- Over 65 firms have been identified offering computational propaganda services, with activity documented across 81 countries (Oxford Internet Institute, 2021).

- Attacks now use AI-generated deepfakes, bots, fake reviews, and hacked data to manufacture damaging narratives at scale — and deepfake fraud incidents increased by 257% in 2024 alone.

- Even major brands like Nike have suffered measurable stock price drops from coordinated dark PR campaigns.

- Recognizing the tactics — deepfake impersonation, sockpuppets, manufactured outrage, leaked documents — is essential to mounting a defense.

Dark PR has evolved from basic smear campaigns into a sophisticated, for-hire industry that uses AI-generated deepfakes, sockpuppet accounts, and stock manipulation to destroy reputations. Services start at just $100, and a convincing deepfake can be created from just three seconds of audio. These tools are available to anyone, from corporations to political groups to individuals seeking revenge. Understanding how these attacks are orchestrated — and how AI has accelerated them — is the first step toward defending against them.

Dark PR firms now charge just $100 to destroy a competitor’s reputation online. This shadowy industry has exploded — and the rise of AI-generated deepfakes has made it more dangerous than ever.

These reputation attacks pack a devastating punch. Bear Stearns’ stock crashed during its 2008 takeover due to “short and distort” tactics. Nike’s share price fell 3.2% in September 2018 after attackers targeted its Colin Kaepernick ad campaign. In early 2024, a finance worker at engineering firm Arup was tricked into wiring $25 million after a deepfake video conference impersonated the company’s CFO and multiple senior executives. Companies, political groups, and government bodies like the NSA have turned negative PR into a weapon to discredit their targets. For example, programs such as COINTELPRO, run by the FBI, were designed to “expose, disrupt, misdirect, or otherwise neutralize” groups and individuals considered subversive.

Who launches these attacks and what drives them? This piece pulls back the curtain on reputation manipulation. Specialized agencies deploy AI misinformation, sockpuppet accounts, and stock manipulation techniques. BellTrox InfoTech Services shows how professionalized deception has become in the digital world: the firm systematically targeted more than 10,000 email accounts.

What is Dark PR and Why It Matters

The shadowy world of dark PR stands in deep contrast to ethical communications practices. Traditional reputation management and public relations build reputations, but dark PR (also called negative PR, black PR, or black hat PR) aims to destroy a target’s reputation through unethical means. This practice has evolved from basic smear campaigns into a sophisticated industry that uses specialized agencies and a digital toolbox.

What Is Dark PR? Definition and Key Differences from Negative PR

Dark PR covers any communication effort that aims to damage, discredit, or destroy competitors through engineered smear campaigns. These campaigns don’t just highlight negative aspects of competitors — they create and spread falsehoods. The tactics are diabolical. They often start with strategically placed rumors and half-truths that grow into reputation-destroying narratives.

Dark PR is all the more dangerous because it is secretive and relies on the built-in anonymity of the internet. Industry research shows practitioners use industrial espionage, social engineering, competitive intelligence, and IT security exploits. Specialized agencies run these operations for clients of all types, from companies seeking competitive advantage to political entities trying to shape public opinion to individuals seeking revenge against an ex. This stands in sharp contrast to ethical reputation management practices, which aim to build and protect credibility through transparent, honest communication.

Dark PR vs. Traditional PR: How They Differ

Traditional PR and specialized reputation management build and maintain positive relationships between organizations and their publics through honest communication. Dark PR works against the ethical standards that guide legitimate public relations.

Traditional PR values truth and transparency, while dark PR weaponizes disinformation. One industry expert points out that “the relationship should be one of nullity, i.e., the ethical component of public relations precludes engaging in communicative activities founded on disinformation.” In spite of that, agencies keep emerging that specialize in destroying rather than improving reputations.

The methods show dramatic differences too. Traditional PR builds credibility through press releases, media relations, and content marketing. Dark PR uses:

- Fake news and manufactured outrage

- Manipulation of product reviews and search results

- Unauthorized leaks of confidential information

- Paid negative influencer campaigns

- Creation of sockpuppet accounts and bots to manipulate Wikipedia

Why Dark PR Is Growing: The Rise of Disinformation-as-a-Service

Dark PR thrives in our increasingly digital landscape. According to the Oxford Internet Institute’s 2021 report, the most recent comprehensive global inventory, independent researchers found computational propaganda in 81 countries. Specialized firms provided these services in 48 of those countries. Researchers have identified more than 65 companies offering computational propaganda services over the last several years, and those are only the ones we know about.

These services have become frighteningly accessible. Anyone can buy negative comments, ratings, or posts in social networks for under $100. This “Disinformation as a Service” (DaaS) market runs primarily on the dark web, making these destructive tools available to everyone — from competing companies to disgruntled employees.

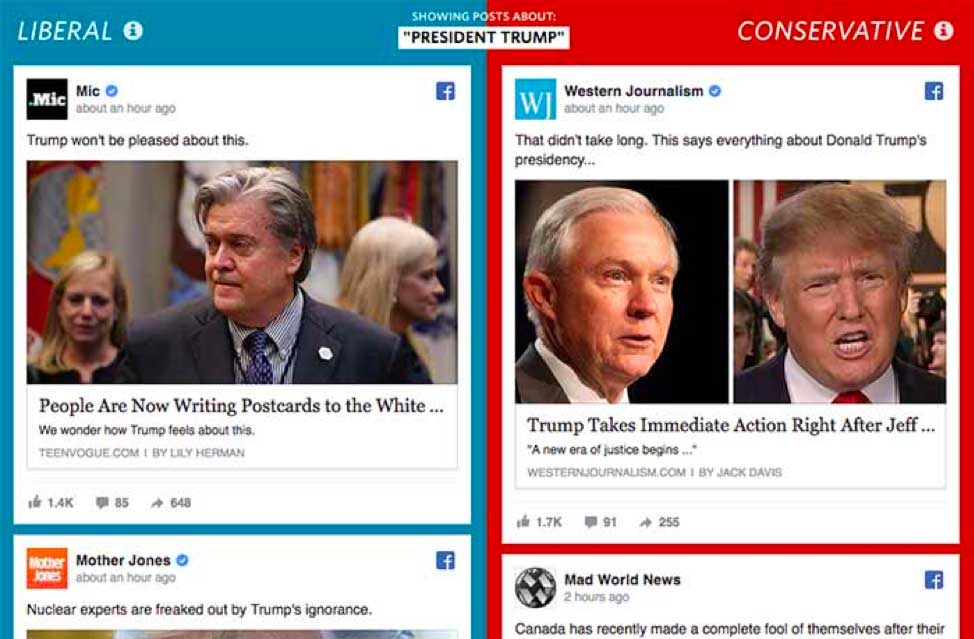

Social media platforms have become ideal environments for dark PR. Trolls (both human and bot) make dark PR measures simple to implement with minimal technical knowledge. That ease, combined with people’s tendency to believe information that matches their existing beliefs, makes these tactics surprisingly effective.

Dark PR campaigns can devastate organizations. Beyond damaging reputations, they can crash stock prices and lead to lost locations and jobs. The industrialization of disinformation — now supercharged by generative AI — poses one of the biggest challenges for legitimate businesses and public discourse heading into 2026.

Inside the Toolbox: Tactics Used by Dark PR Agencies

The tools used by dark PR operatives have grown more sophisticated over the years, especially with the seemingly sudden advent of artificial intelligence. Specialized agencies now use advanced techniques to shape public opinion. Their tactics range from AI-powered fake content to market manipulation — all meant to damage reputations with precision, providing solid ROI to their customers.

AI-Generated Fake News and Deepfake Attacks

AI has become the ultimate weapon for spreading false information. New machine learning tools can create fake content that looks just like real news. As noted above, the Oxford Internet Institute (2021) documented computational propaganda in 81 countries, with specialized firms offering these services in 48 of them. In 2023, Wharton professor Ethan Mollick demonstrated that anyone could create a convincing deepfake video in just eight minutes for $11 using off-the-shelf AI tools, as documented by NPR. The barrier has since dropped even further: scammers need as little as three seconds of audio to create a voice clone with an 85% match to the original speaker, according to McAfee (2024).

Dark PR agencies make use of this technology to create fake stories that blend naturally with real reporting. Researchers found an AI-generated article about Benjamin Netanyahu’s psychiatrist. The article claimed the psychiatrist left a note that implicated the Israeli prime minister — a story that was completely false. These fake stories look more credible next to real content on what seem like trustworthy websites.

Deepfake technology has dramatically escalated the threat since this post was first written in 2018. Where dark PR once relied on text-based misinformation, attackers can now generate realistic video and audio of executives, politicians, and public figures saying things they never said. In February 2024, engineering firm Arup lost $25 million when an employee was deceived by a multi-person deepfake video conference that convincingly impersonated the company’s CFO and other senior executives, as reported by The Guardian. According to the Deloitte Center for Financial Services, generative AI-enabled fraud losses in the U.S. are projected to climb from $12.3 billion in 2023 to $40 billion by 2027. The implications for reputation attacks are clear: fabricated video of a CEO making inflammatory statements, a fake earnings call, or a doctored product recall announcement can cause irreversible damage before the target even knows it exists.

Social media bots and sockpuppets

Bots and fake accounts serve as digital foot soldiers in dark PR campaigns. They make up between 5% and 50% of social media discussions, depending on the topic. These automated accounts serve several significant functions:

- They magnify messages to create false consensus (astroturfing)

- They infiltrate online social networks to spread false information faster

- They interact with humans in simple ways to look real

- They trick algorithms to make content trend

Sockpuppet accounts — fake personas with believable profiles — create an illusion of widespread support or opposition. Research shows bots participate in up to 20% of social media conversations. Pre-acquisition studies estimated bots created between 20.8% and 29.2% of Twitter content. Since Elon Musk’s 2022 acquisition and rebranding to X, the bot landscape has shifted but remains contested: a 2024 analysis by 5th Column AI estimated that approximately 64% of the 1.269 million X accounts it analyzed showed bot-like behavior, though a separate study published in Nature (2025) aligned more closely with earlier estimates of around 20%. What’s clear is that automated manipulation on social platforms remains a persistent and growing problem regardless of which estimate is closer to reality.
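On the defensive side, one simple signal of astroturfing is many distinct accounts posting near-identical text. The sketch below is a minimal illustration of that idea, not a production bot detector: the account names, sample posts, and the `min_accounts` threshold are all hypothetical, and real users also copy and repost text, so a match is a prompt for human review rather than proof of coordination.

```python
import re
from collections import defaultdict


def normalize(text):
    """Lowercase, drop URLs and punctuation, and collapse whitespace."""
    text = re.sub(r"https?://\S+", "", text.lower())
    text = re.sub(r"[^a-z0-9\s]", "", text)
    return " ".join(text.split())


def find_copy_paste_clusters(posts, min_accounts=3):
    """Return normalized texts that were posted by many distinct accounts.

    `posts` is an iterable of (account, text) pairs. The threshold
    `min_accounts` is an illustrative assumption, not an industry standard.
    """
    accounts_by_text = defaultdict(set)
    for account, text in posts:
        accounts_by_text[normalize(text)].add(account)
    return {
        text: sorted(accounts)
        for text, accounts in accounts_by_text.items()
        if len(accounts) >= min_accounts
    }


# Hypothetical sample data for illustration only.
posts = [
    ("user_a", "Acme products are a SCAM!! https://example.com/1"),
    ("user_b", "acme products are a scam"),
    ("user_c", "Acme products are a scam!"),
    ("user_d", "Had a great experience with Acme support today."),
]
clusters = find_copy_paste_clusters(posts)
print(clusters)
```

Here the three near-identical complaints collapse into one cluster, while the unrelated post is ignored. A fuller approach would also weigh account age, posting times, and follower overlap.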

Wikipedia manipulation and SEO attacks

Companies often try to manipulate their Wikipedia information. Research by Der Spiegel (2012) showed manipulation attempts affected one in three Wikipedia articles about major German corporations. PR agencies write and change entries for their clients, which creates big problems for Wikipedia’s community. For more, see our detailed analysis of how propaganda enters Wikipedia through biased editors.

SEO attacks try to control what people find when they research a company or person online. In a related tactic known as “information laundering,” a false claim is first planted in questionable content on a less trusted website. Other media then report on those reports rather than on the claim itself, which lets the story spread while protecting established outlets from having to retract it.

Short and distort strategies

Market manipulation serves as another dark PR tactic. “Short and distort” means betting against a stock and then spreading false rumors to lower its price. Traders take short positions in a company before spreading negative stories through social media, spam email, and fake news. At Reputation X, we have had to work hard to reverse the effects of short and distort strategies, a dynamic explored in depth in our guide to corporate reputation warfare.

Paul Berliner, a Wall Street trader, faced securities fraud charges in 2008. He spread false rumors about Alliance Data Systems while betting against their stock. The SEC made him pay a $130,000 fine and give up over $26,000 in profits. Financial lies can shake markets quickly — like the time a fake Pentagon explosion photo made the S&P 500 drop by three-tenths of a percent in 2023.

Hacking and unauthorized leaks

Hack-and-leak operations make use of real documents from cyberattacks. They release this information strategically to shape media coverage and hurt their targets. These operations use real information but often mix in “tainted leaks” that combine true and false content.

Reuters reported on an FBI investigation of an ExxonMobil consultant. The consultant allegedly helped plan a hack-and-leak operation targeting environmental activists. According to the report, a list of hacking targets was drawn up and an Israeli private detective was hired to do the work. The stolen information was then allegedly leaked strategically to weaken lawsuits against energy companies.

Dark ads and microtargeting

Dark ads — paid social media posts that only specific audiences see — help attack reputations effectively. Unlike regular ads, these posts never show up on the advertiser’s social media pages. This makes them almost impossible to track. Attackers can spread harmful content to exact demographic groups without anyone else seeing it.

These ads promote negative ideas in closed communities. They use people’s confirmation bias to strengthen damaging stories. They’re hard to monitor and look like organic posts instead of paid content. The hidden nature of these ads makes them very effective at spreading false information without consequences.

State-Sponsored Dark PR: Government Disinformation Operations

The campaigns these agencies design are both ingenious and diabolical in nature. It starts with a rumor told here, a half-truth there. Then documents magically show up that are seemingly innocuous at first, but when combined with bombshells to come, become the lynchpins in a string of false logic bombs.

With a little belief and enough circumstantial evidence, people with even the smallest affinity for the message of the lie will swallow it hook, line, and sinker, especially if the message confirms what they already believe. This is called confirmation bias.

Below is an image of how social media creates an information bubble catered to our existing beliefs, thereby reinforcing our existing bias. This psychological trick is commonly exploited by perpetrators of fake news.

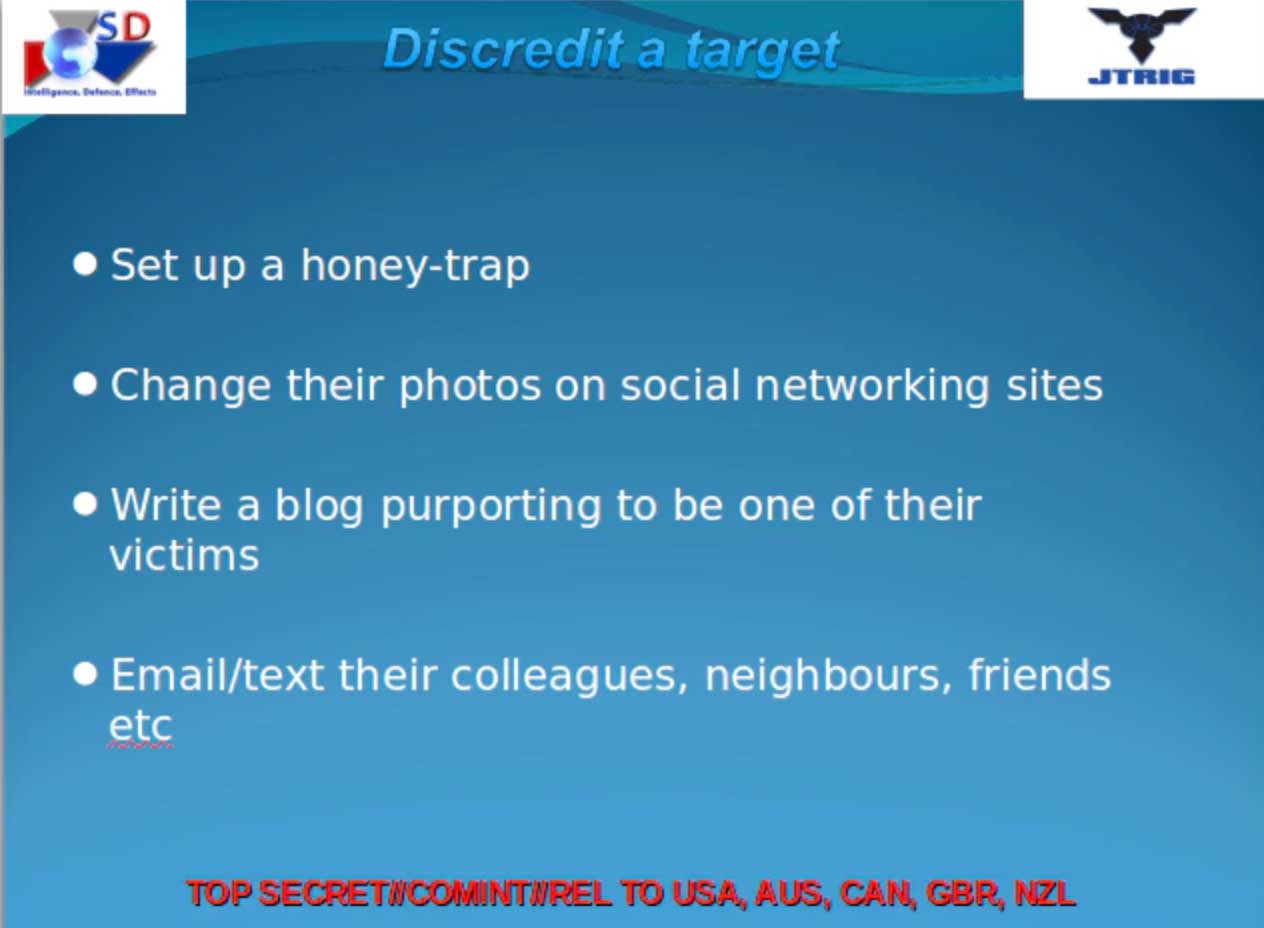

Western spy agencies like Britain’s GCHQ and the NSA apparently devote significant manpower and resources to online “intelligence” operations that consist of negative SEO tactics aimed at enemies of the state. They use fake names, hidden IP addresses, planted news stories, and other smoke-and-mirror tactics to publish damning or unflattering content about their targets.

They can even go as far as to plant fake evidence, which is later “discovered” by an anonymous source and fed to the press at an opportune time. A few Google searches will verify it as “true,” and since people hold what Google presents in such high esteem, it is all the easier to believe.

Who Uses Dark PR and What Do They Want?

Dark PR campaigns don’t appear out of thin air – they serve clients with clear objectives and motives. Organizations from all sectors launch reputation attacks, each with unique reasons to deploy these harmful strategies.

Corporations targeting competitors

Corporate dark PR has grown from basic smear campaigns into a sophisticated money-making machine. Specialized firms now help companies attack their rivals through coordinated campaigns. BellTrox InfoTech Services in India helped clients hack over 10,000 competitor email accounts during a seven-year period. Their targets ranged from hedge funds to law firms, and even Wall Street Journal and Financial Times reporters. Many blue-chip American and European companies reportedly used their services.

Companies now regularly use “short and distort” strategies to sway market opinion. Coordinated outrage can move share prices as well: Nike’s Colin Kaepernick advertisement sparked a boycott campaign in September 2018, and the company’s shares dropped 3.2% just one day after the campaign launched.

Political parties and governments

Russia’s political sphere gave birth to dark PR before it spread worldwide. Politicians and governments now use these tactics to shape public conversations. Puerto Rican journalists discovered former governor Ricardo Rosselló’s connection to KOI, a marketing firm. KOI ran social media campaigns that promoted pro-government messages while attacking opponents.

Marketing companies like Smaat worked with the Saudi Arabian government, as revealed through leaked documents. The advertising agency Panda ran similar operations for Georgia’s government.

Private individuals and influencers

Social media influencers play a vital role in dark PR operations. Researchers found a company called Influenceable that connects corporations with content creators who share their ideology. The soft drink lobby paid influencers six-figure sums through this firm. These creators reframed public health debates as freedom issues.

According to recent leaks, the Democratic National Committee maintains a network of paid influencers who promote aligned messages while creating the appearance of grassroots support.

Motivations: power, profit, revenge

Power, profit, and revenge drive dark PR campaigns. Corporate attackers usually want a competitive edge. They target rivals during lawsuits, corporate takeovers, or when companies try to raise capital or launch IPOs.

Dark PR’s financial rewards make it hard to resist. One agency director admitted these services tempt firms because of massive profit potential. Political players use these tactics to control stories, silence opposition, or boost dissent. Public opinion becomes a weapon for winning elections or influencing policy.

Real-World Examples of Reputation Manipulation

Dark PR operations on the ground reveal a disturbing digital world where reputation attacks create lasting damage. These cases show how this shadow industry works beyond theoretical concepts.

Bear Stearns and financial rumor campaigns

The 2008 collapse of Bear Stearns shows the destructive power of rumor-driven attacks. Bear Stearns survived the Great Depression and the 9/11 attacks but fell victim to what Wall Street veterans call a coordinated “bear raid.” Rumors about liquidity problems spread quickly through CNBC coverage that kept discussing “credit issues at Bear” without evidence. Major hedge funds started pulling their money out, which created an unstoppable spiral. Investigators found a flood of “novation” requests (attempts to transfer credit risk away from Bear), pointing to a coordinated attack on the firm’s reputation. Bear Stearns’ stock crashed within days, leading to a government-backed sale to JPMorgan at an initial price of just $2 per share.

Imaginary Kuwait Operation ‘Nanny Goat’

Note: While the previous examples are documented incidents, the following illustrates a theoretical scenario — a social experiment presented at DEF CON 24 — that demonstrates how a coordinated dark PR campaign could destabilize an entire country.

To get a good idea of how a nation could be affected we can look at a social experiment called “Operation Nanny Goat” — a non-government hacker team’s fake takedown of Kuwait’s prime minister. In this imaginary scenario, the hackers “broke into” ISPs, banks, media outlets, and the city’s water system. They moved government funds to the PM’s controlled accounts and created fake evidence of corruption. The attackers cut off the city’s water supply and paid protesters to demonstrate. Some people dressed as police attacked these same protesters — a calculated deception campaign to destabilize leadership.

BellTrox and corporate espionage

BellTrox InfoTech Services, based in New Delhi, targeted over 10,000 email accounts between 2013 and 2020. Their victims included government officials, gambling tycoons, investors, and environmental groups. This “hack-for-hire” firm used clever phishing campaigns with emails that looked like they came from colleagues, friends, or adult content notifications. BellTrox’s director Sumit Gupta, previously charged in California for similar crimes, ran the business openly with the slogan “you desire, we do!” The firm kept operating despite legal pressure, showing the ongoing threat from cyber mercenaries.

Russian election interference

This is an obvious one, but Russian election interference shows how foreign powers use dark PR tactics. Modern Russian campaigns target existing social divisions instead of using traditional propaganda. They inject false information into political discussions and promote conspiracy theories through social media’s reach. These operations run on small budgets — experts describe them as “low-rent, on-the-cheap, fly-by-night” efforts meant to create confusion rather than destroy democratic systems.

AI Deepfakes and Reputation: Recent Cases

The rapid evolution of deepfake technology has opened an entirely new front in reputation manipulation. These cases from 2024–2025 show how AI-generated audio and video are being weaponized against organizations and individuals.

Arup — $25 million deepfake video conference (February 2024). In one of the largest known deepfake fraud cases, a finance worker at British engineering firm Arup was tricked into transferring $25 million after joining a video conference call where every other participant — including the company’s CFO — was an AI-generated deepfake. As reported by The Guardian, the attackers used publicly available video and audio of Arup executives from YouTube to generate convincing likenesses. The employee believed the call was legitimate and authorized 15 separate transactions to five Hong Kong bank accounts.

Ferrari — CEO voice cloning attempt (July 2024). Criminals used AI to clone the voice of Ferrari CEO Benedetto Vigna and attempted to deceive a senior executive into authorizing a fraudulent transaction. The target grew suspicious when the impersonator couldn’t answer a personal verification question that only the real CEO would know. Ferrari’s quick-thinking employee prevented any financial loss, but the incident underscored that even the most sophisticated organizations are now targets for AI-powered impersonation.

AI robocall impersonating Joe Biden (January 2024). Just ahead of the 2024 New Hampshire Democratic primary, voters received an AI-generated robocall mimicking President Biden’s voice, urging Democrats not to vote. The incident demonstrated how deepfakes are being deployed not just for financial fraud but to manipulate elections and public discourse, the core functions of political dark PR. WIRED’s AI Elections Project subsequently tracked at least 78 election-related deepfakes globally throughout 2024.

These cases represent just the tip of the iceberg. According to Sumsub, North America experienced a 1,740% increase in deepfake fraud between 2022 and 2023. Data from Resemble AI and the AI Incident Database shows that deepfake incidents surged from just 22 total between 2017 and 2022, to 150 in 2024, to 179 in the first quarter of 2025 alone — surpassing the entire previous year in just three months. According to the Deloitte Center for Financial Services, AI-enabled fraud losses in the U.S. are projected to reach $40 billion by 2027.
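Large percentage figures like these are easy to misread, so it can help to convert them into plain multiples. The short sketch below uses only the numbers quoted above; the two helper functions are illustrative names of our own, not part of any cited report.

```python
def increase_to_multiple(percent_increase):
    """Convert a percentage increase into a multiple of the starting value."""
    return 1 + percent_increase / 100


def percent_change(old, new):
    """Percentage change going from `old` to `new`."""
    return (new - old) / old * 100


# A 1,740% increase means roughly 18.4 times the starting level.
print(round(increase_to_multiple(1740), 1))  # 18.4

# 179 incidents in Q1 2025 versus 150 in all of 2024.
print(round(percent_change(150, 179), 1))  # 19.3
```

Note that the second comparison sets a single quarter against a full year, so the underlying rate of growth is considerably steeper than 19.3% suggests.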

How to Protect Your Brand from Dark PR Attacks

Dark PR tactics have become more sophisticated. Organizations need reliable defenses to protect their reputation. A mix of active monitoring, strategic planning, and quick response can shield your brand from malicious attacks.

Early detection and web monitoring

AI-powered monitoring tools now serve as a critical first line of defense by continuously watching your digital presence. Platforms like Brandwatch and Mention track consumer feedback and brand mentions across social media, news outlets, forums, and review sites, spotting reputation risks early. For example, AI can detect growing complaint patterns or sudden spikes in negative sentiment on social media that allow quick intervention before a coordinated attack gains momentum.
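To make the idea of a "sudden spike in negative sentiment" concrete, here is a minimal sketch of one common approach: comparing each day's count of negative mentions against a rolling baseline. It is an illustration under stated assumptions, not how Brandwatch or Mention work internally. The sample counts, the 7-day window, the threshold of 3 standard deviations, and the minimum deviation floor are all hypothetical values chosen for the example.

```python
from statistics import mean, stdev


def find_spikes(daily_counts, window=7, threshold=3.0):
    """Return indexes of days whose count is unusually high.

    A day is flagged when its count exceeds the mean of the previous
    `window` days by more than `threshold` standard deviations.
    """
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        # Assumption: floor the deviation at 1.0 so a perfectly flat
        # baseline does not cause division by zero or hair-trigger alerts.
        sigma = max(stdev(baseline), 1.0)
        if (daily_counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


# Hypothetical daily counts of negative brand mentions.
negative_mentions = [4, 6, 5, 7, 5, 6, 4, 5, 6, 48, 7, 5]
print(find_spikes(negative_mentions))  # [9]
```

One design caveat: once a spike enters the rolling window it inflates the baseline, so follow-on days of a sustained attack may not be flagged. A real monitoring setup would likely exclude flagged days from the baseline or use a more robust statistic such as the median.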

Your protection must extend beyond regular channels to include dark web monitoring for credential leaks or planned attacks. The urgency of early detection has increased: according to Mandiant’s M-Trends 2025 report, the global median dwell time for cyber threats — the time between initial compromise and detection — is now 11 days, down dramatically from approximately 200 days a decade ago (Mandiant, 2025). While this improvement reflects better detection overall, it also means attackers now move faster and cause damage more quickly, making real-time monitoring essential rather than optional.

Reputation management best practices

Good reputation management practices depend on constant watchfulness and active involvement:

- Set up alerts (Talkwalker Alerts, Google Alerts, etc.) for brand-related keywords to get instant updates

- Keep local listings accurate and consistent on all digital platforms

- Reply quickly to all reviews — good and bad — since according to BrightLocal’s 2026 Local Consumer Review Survey, 98% of consumers at least occasionally read online reviews. Keep responses to bad news short, good news long (for SEO reasons)

- Publish quality content regularly to control your online narrative

- Prepare pre-written crisis response templates that can be adapted quickly when an attack is detected, so your team isn’t drafting communications from scratch under pressure

Crisis response planning

Once your organization reaches a certain size, it probably needs a dedicated crisis management team with clear roles. Create a detailed crisis plan that spells out communication rules and response steps. For a comprehensive guide to this process, see our post on building a reputation crisis plan. Regular practice sessions help test your team’s preparedness. Companies that run crisis drills handle real threats more successfully. Staying transparent during any crisis matters most because silence makes public opinion worse.

Legal and ethical countermeasures

Media-specialist lawyers should be ready to contact publications on legal grounds when dark PR attacks happen. Keep evidence that disproves false claims – it helps brief journalists and update stakeholders. Sometimes you might need lawyers to file defamation suits against known attackers.

As a first-line defense before legal action, use platform reporting mechanisms to flag fake accounts, impersonation profiles, and coordinated inauthentic behavior directly to X (formerly Twitter), Meta, and Google. These platforms have dedicated teams and processes for removing fraudulent content, and a well-documented report filed quickly can contain damage while your legal team prepares a broader response.

The strongest defense against reputation attacks combines legal action with open communication. For organizations recovering from an attack, our guide on how to rebuild reputation after a crisis provides a practical framework for restoring trust.

Conclusion

Dark PR has become a serious threat. It consists of shadowy tactics used by specialized agencies that charge as little as $100 to destroy reputations and manipulate public perception. The business of disinformation has grown into a sophisticated model, with over 65 companies offering computational propaganda services according to the Oxford Internet Institute (2021). The rise of AI-generated deepfakes has made these attacks faster, cheaper, and harder to detect — as the $25 million Arup incident in 2024 demonstrated with devastating clarity.

Cases like Bear Stearns’ collapse, BellTrox’s corporate espionage operations, and the wave of deepfake CEO fraud in 2024–2025 show damage way beyond reputation loss. Companies watch their stock prices plummet, businesses fail, and real people lose their jobs. The attacks keep getting more sophisticated with AI-generated deepfakes, hack-and-leak operations, and social media manipulation. Yet organizations shouldn’t feel helpless.

Protection starts with alertness and good preparation. Early detection systems, detailed web monitoring, and crisis response planning reduce vulnerability to reputation attacks substantially. Strong legal countermeasures and transparent communication give essential protection against even the most coordinated disinformation campaigns.

The fight against dark PR comes down to awareness. Companies that understand the tactics, perpetrators, and motivations behind reputation attacks build stronger defenses. Dark PR practitioners keep evolving their methods. Yet organizations that focus on reputation management and stick to ethical communication standards are better prepared to handle these threats. Truth is, while bad actors can twist information for a while, real credibility built on honest relationships and transparency remains the best shield against reputation manipulation.

Statistics are drawn from the Oxford Internet Institute, Mandiant, BrightLocal, Sumsub, Deloitte, McAfee, and other independent researchers, academic studies, and industry reports cited within the article.
