The Big Fat Guide to Mastering Wikipedia’s Editing Rules

Learn how to navigate Wikipedia's strict editing policies with confidence, from sourcing standards to conflict of interest rules, without losing your work.

- All Wikipedia content must meet three core standards: Neutrality, Verifiability, and No Original Research.

- Cite reliable, published, independent sources; relying on blogs and press releases is a common reason edits get removed.

- Write in a neutral tone; promotional or biased language triggers editor scrutiny and deletion.

- Disclose conflicts of interest and use talk pages to collaborate rather than editing unilaterally.

- Wikipedia runs on community consensus—working with editors, not around them, is essential.

Wikipedia's editing rules are strict, specific, and unforgiving for those who don't understand them. This guide breaks down the platform's core content policies—Neutrality, Verifiability, and No Original Research—and explains how to apply them in practice. It also covers conflict of interest navigation, reliable sourcing, talk page engagement, and the consensus-based editing culture that determines what stays and what gets deleted.

How to Master Wikipedia's Editing Rules

1. Learn Wikipedia's three core content policies

Wikipedia's editing rules rest on three non-negotiable pillars: neutrality, verifiability, and no original research. These policies work together to keep the encyclopedia reliable, well-sourced, and free of bias. Understanding how they interconnect will help you anticipate why edits get removed and how to write content that survives review.

2. Avoid adding original research

Wikipedia does not accept unpublished analysis, personal conclusions, or novel ideas—even if they are well-reasoned or factually sound. Every claim you add must be traceable to an existing, published source rather than your own interpretation. Review your edits carefully to ensure you are reporting what sources say, not synthesizing a new argument from them.

3. Identify and use reliable sources

Not all references meet Wikipedia's verifiability standard: blog posts, press releases, and self-published material are generally not acceptable. Prioritize peer-reviewed research, established news organizations, and recognized academic or institutional publications. Before saving an edit, confirm that each claim is backed by a source Wikipedia's community would consider credible.

4. Write with neutrality in every edit

Wikipedia's neutrality policy requires that articles present all significant viewpoints fairly without promoting one perspective over another. Avoid promotional language, loaded adjectives, or framing that favors a particular outcome. Keep in mind that fringe views do not receive the same weight as well-supported scientific or scholarly consensus.

5. Navigate conflicts of interest ethically

If you are editing an article about your employer, a client, or yourself, you are operating in conflict-of-interest territory that Wikipedia takes seriously. Disclose your relationship transparently and consider proposing changes on the article's talk page rather than editing directly. Working collaboratively with the editing community in these situations protects both your credibility and the integrity of the article.

6. Engage productively on talk pages

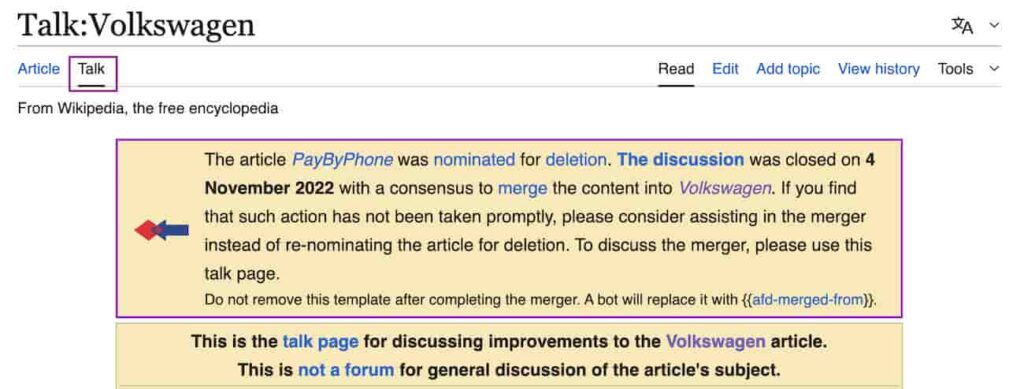

Talk pages are the behind-the-scenes discussion boards where editors debate, justify, and negotiate changes to articles. Use them to explain your reasoning, ask questions, and understand why previous edits were reverted. Participating constructively in these spaces builds goodwill with other editors and gives you insight into the community's expectations.

7. Work within Wikipedia's consensus model

Wikipedia decisions are rarely made unilaterally—changes to contested content are typically resolved through community consensus rather than individual persistence. Instead of repeatedly reverting edits, engage with other contributors to find common ground that satisfies Wikipedia's policies. Understanding this collaborative model will help you work with the community rather than against it.

-

8

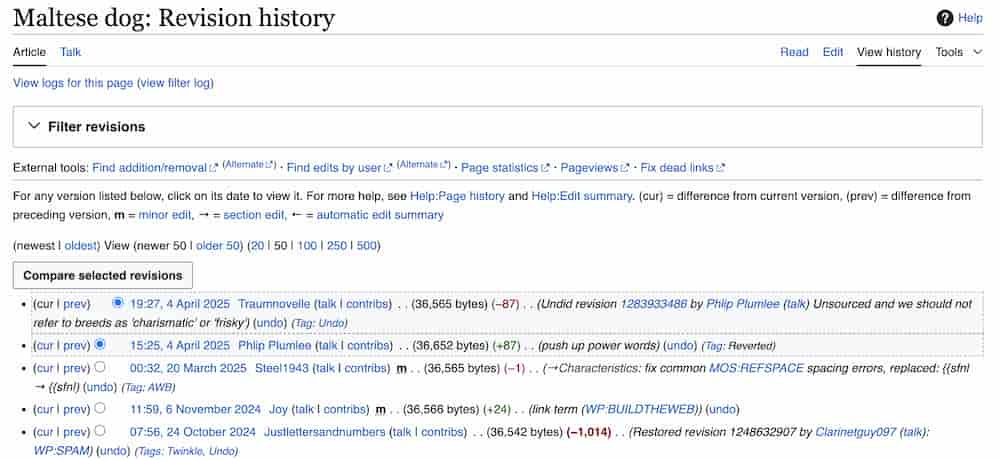

Use available tools to monitor and improve your edits

Wikipedia offers resources such as watchlists, edit history logs, and experienced editor mentorship programs to help you track and refine your contributions. Monitoring article changes after you edit allows you to respond quickly if content is flagged or removed. Leveraging these tools keeps you informed and helps you build the expertise needed to make edits that consistently meet Wikipedia's standards.

Mastering Wikipedia’s rules doesn’t just protect your edits—it gives you the confidence to create content that sticks. With a solid grasp of the platform’s guidelines, and by using the available Wikipedia resources, you’ll not only minimize frustration but also contribute meaningfully to one of the internet’s most trusted spaces.

Understanding Wikipedia’s Core Content Policies

Wikipedia operates on a foundation of three core content policies that function as its guiding principles: neutrality, verifiability, and the prohibition of original research.

These policies are non-negotiable and interdependent, meaning they collectively ensure the encyclopedia remains reliable, well-sourced, and free of bias. If you’ve ever wondered why Wikipedia doesn’t read like a Reddit debate or a personal blog, these policies are the reason. They aren’t just rules—they’re the backbone of how Wikipedia maintains its credibility as a source of information.

When you dig into these policies, it becomes clear that they have practical, real-world applications you’ll encounter constantly while editing. Take neutrality, for example. At its core, the neutrality policy is about balance—it mandates that articles don’t promote one perspective over another but present all significant viewpoints fairly. This doesn’t mean giving equal weight to every opinion, though. A fringe theory with no basis in reliable research doesn’t get the same treatment as a well-regarded scientific consensus. This distinction is key, and one that Wikipedia editors consistently enforce to avoid turning articles into battlegrounds for advocacy.

Verifiability is another cornerstone. It’s not enough to believe something is true or even personally know it to be true—on Wikipedia, every claim needs to be backed by a reliable source. This is where citations come into play, and why you’ll often see those little superscript numbers in articles leading to books, journals, or credible websites. Verifiability saves Wikipedia from being a platform for unchecked assertions and forces contributors to root their edits in evidence.

Finally, there’s the policy against original research. Wikipedia isn’t the place to break news, publish a personal analysis, or introduce a new concept of your own. This policy often trips up well-meaning editors who may have unique insights they’re eager to share, but Wikipedia draws a firm line: it’s a summary of existing, published knowledge, not a forum for creating something new.

What’s fascinating is how intertwined these policies are. Each one supports and reinforces the others. Verifiability complements neutrality: without reliable sources, there’s no way to ensure an article adequately reflects opposing viewpoints. Similarly, banning original research keeps the platform focused on documenting established information rather than veering into speculative or biased territory.

Understanding these core policies isn’t just about following rules—it’s about recognizing the values that make Wikipedia a unique space for global knowledge-sharing. Once you grasp these essentials, it’s easier to navigate the platform’s expectations and contribute edits that hold up to community scrutiny. For a deeper look at how Wikipedia’s content standards intersect with your online presence, see our guide on how Wikipedia shapes online reputation far beyond search.

What Is Original Research on Wikipedia?

If you’ve ever dabbled in Wikipedia editing, you’ve probably heard the term “original research” thrown around, often with an air of caution. It’s a cornerstone of the platform’s content policies, yet it can feel frustratingly abstract when you’re diving into guidelines for the first time. At its core, the original research rule is about protecting the neutrality and credibility of the encyclopedia by ensuring that everything on Wikipedia is backed by verifiable, published sources—not the personal insights or interpretations of its contributors.

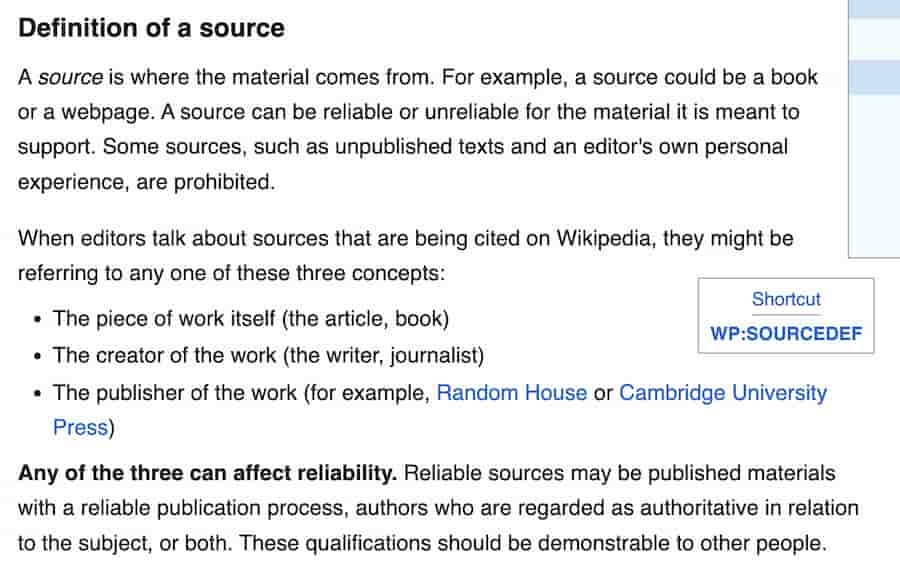

Definition of Original Research

Wikipedia defines original research, or OR, as material that has not been published in a reliable source.

This encompasses content that hasn’t undergone some form of external scrutiny or review before appearing on Wikipedia. Original research isn’t just about publishing a groundbreaking study on an obscure topic; it can be as subtle as introducing your interpretation of existing data or drawing conclusions not explicitly stated in the sources you’re using. The platform is built on the principle of summarizing what’s already out there in a way that remains neutral and factual.

This is where the challenges for enthusiastic editors tend to pop up. It’s easy to view yourself as adding value by synthesizing information from different sources or providing detailed commentary. But if your wording or emphasis strays beyond what the sources explicitly say, Wikipedia considers that your own analysis—and that’s where you tip into original research.

Examples of What Constitutes Original Research

Sometimes the concept of original research feels abstract until you see it in action. Consider a scenario: you’re working on improving the Wikipedia page for a scientific discovery. You find multiple sources discussing the breakthrough, but none of them explicitly connect the implications to a current political debate. Drawing that connection on your own—even if it seems logical—constitutes original research because the conclusion didn’t come from the published sources you’re citing.

Another common example involves combining statistics in creative ways. Taking population growth data from two sources and calculating an additional trend line to project future numbers might feel helpful—but unless your secondary sources make that same calculation, your synthesized result is original research. Even the way you group or juxtapose information can inadvertently step into this territory if the connections are uniquely yours rather than something verified in a reliable source.

How to Avoid Original Research in Your Edits

Avoiding original research doesn’t require a complete overhaul of your editing instincts—it just demands a strong commitment to rigorous sourcing and staying true to your materials. The first rule of thumb is to let your sources do the talking for you. Summarize their content without embellishing, speculating, or adding context beyond what is plainly in the text. If you find yourself writing something that feels like an interpretation, pause and ask: “Does this conclusion appear explicitly in the source I’m citing?” If the answer is no, revisit your edit.

Another useful habit is working from secondary sources rather than primary ones, especially if you’re new to Wikipedia editing. Secondary sources have typically been vetted by experts or peers and provide the kind of contextual analysis that Wikipedia relies on. Citing a scholarly article that discusses the impact of a historical treaty is far safer than drawing your own conclusions from the treaty’s text; if you want to say what the treaty meant or achieved, cite a secondary source that makes that point.

Transparency matters too. Always ensure your edit clearly reflects the source you’re using without paraphrasing in a way that adds unintended meaning. Don’t “clean up” overly academic language into something more dramatic or opinionated for the sake of readability—it risks introducing bias or distortion.

Finally, lean into Wikipedia’s collaborative structure. Talk pages on each article are invaluable when working on tricky subjects that walk the fine line between summary and original research. Discussing your approach with other contributors helps build consensus and ensures your edits align with Wikipedia’s rules.

By staying anchored in verifiable sources and resisting the urge to infuse your own knowledge or perspective, you’re not just avoiding original research—you’re actively contributing to the trustworthiness and reliability of Wikipedia as a resource for millions worldwide.

Verifiability on Wikipedia: What It Means and Why It Matters

If you’ve ever taken a peek behind the scenes at Wikipedia’s vast collection of articles, you’ve seen the one key principle that keeps it all afloat: verifiability. It’s not just a rule buried deep in Wikipedia’s policy pages; it’s the backbone of what makes Wikipedia a reliable resource. But what does “verifiability” actually mean in practical terms?

Think of verifiability as Wikipedia’s commitment to ensuring every fact and claim on the platform can be checked and traced back to a credible, published source. It’s not about whether something is objectively true—because defining “truth” can get tricky in an encyclopedia built collaboratively. Instead, the question boils down to whether a reasonable editor could verify the information by consulting a reputable source.

The Concept of Verifiability in Wikipedia

Wikipedia defines verifiability simply: information must be backed by sources that readers can consult to confirm accuracy.

The mantra here is “verifiability, not truth.” This doesn’t mean Wikipedia doesn’t care about accuracy; it does. But the goal is to keep the playing field even by requiring every assertion, no matter how seemingly obvious, to be attributable to a reliable, published source. Even if everyone agrees that the Eiffel Tower is one of the most famous landmarks in the world, that statement must still be verifiable, and if another editor challenges it, it needs an inline citation to a reliable source to remain on Wikipedia.

This standard creates a chain of accountability. It ensures editors aren’t slipping in their own opinions or unverifiable claims, and it makes edits easier for other contributors to review. Content that can’t be traced back to a credible source can be challenged and removed.

Sources Considered Reliable for Verifiability

Not every source that looks professional on the surface is automatically suitable for Wikipedia. Verifiability hinges heavily on the idea of “reliable sources.” These are typically secondary sources—think well-established newspapers, academic journals, peer-reviewed studies, or books put out by reputable publishers. Independent reporting and expert analysis are what Wikipedia holds as gold-standard citations. Citing an article from The New York Times or Nature carries more weight than using a quick blog post from an unknown website.

Wikipedia editors treat self-published sources—like personal blogs or websites people curate themselves—with extreme caution. Such sources are generally avoided unless the person behind them is a recognized expert writing within their specific field. A renowned scientist’s blog on quantum mechanics might work in certain cases; a random Reddit thread discussing the same topic will not.

Primary sources, like government reports, court documents, or firsthand accounts, can be used, but sparingly and with care. Wikipedia favors secondary sources because they tend to provide more context, analysis, and perspective, which aligns with its role as a tertiary resource summarizing existing knowledge. Understanding what qualifies as a strong reference is also essential if you’re working toward securing media coverage that can serve as Wikipedia references.

Common Mistakes to Avoid When Ensuring Verifiability

Verifiability, while central, isn’t free from pitfalls. One common error is assuming that because something is widely known or shared across social media, it must be verifiable. Wikipedia doesn’t operate on the “everyone knows this” principle. Editors need to find sources that meet the platform’s reliability standards—if a claim is worth including, a credible source should be able to back it up.

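Another frequent misstep involves using cherry-picked sources that push a particular narrative. Citing an advocacy organization to present a controversial claim as established fact is unlikely to hold up. Wikipedia doesn’t require sources to be neutral, but biased or opinionated sources usually need in-text attribution (for example, “according to [organization]”) and should be weighed against well-regarded independent coverage.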

Finally, think about accessibility when choosing citations. Sources behind paywalls or in niche publications are not forbidden, and Wikipedia’s verifiability policy explicitly says not to reject reliable sources just because they are difficult or costly to access. When two sources are equally reliable, however, a freely available one makes it easier for readers to check the claim. Wikipedia thrives on transparency, not just for editors, but for its readers.

Staying Neutral and Compliant on Wikipedia

At its core, Wikipedia thrives on one of its most important guiding principles: neutrality. This concept, encapsulated in the Neutral Point of View (NPOV) policy, ensures Wikipedia doesn’t become a collection of opinions or promotional material. Instead, it remains a continuously updated source of verified information that serves all readers, not just a specific audience or interest group.

Actively maintaining neutrality in your edits can feel like walking a tightrope, especially when dealing with contentious topics or areas where you might unknowingly carry your own biases.

Understanding Wikipedia’s Neutral Point of View (NPOV) Policy

The NPOV policy isn’t just a suggestion—it’s a non-negotiable rule that applies to every single entry, whether the topic is historical events, scientific theories, or a local business’s profile. Wikipedia expects content to be written in a way that does not promote any viewpoint, ideology, or agenda. It’s about presenting facts fairly and proportionally, based on reliable sources, while steering clear of language that carries implicit judgment or favoritism.

Consider an article about climate change. Even if the scientific consensus overwhelmingly supports certain conclusions, neutrality doesn’t mean giving undue space to fringe theories for the sake of balance. It does, however, require that alternative viewpoints covered by credible sources be mentioned appropriately, without dismissive language or disproportionate weight. Neutrality is not about “equal airtime”; it’s about fair representation in proportion to each view’s prominence in reliable sources.

Tips to Maintain Neutrality in Your Edits

Here are actionable strategies to keep your edits compliant with the neutrality policy:

- Stick to reliable sources: If you’re relying on sensational headlines, opinion pieces, or sources with clear biases, your edit is likely to become problematic. Always check that your references align with Wikipedia’s criteria for reliability—peer-reviewed journals, reputable news outlets, or expert consensus, depending on the subject.

- Use dispassionate language: Words have weight. Terms that carry emotional or judgmental tones—like “obviously,” “revolutionary,” or “controversial” when unexplained—can undermine the neutrality of an entry. Replace such language with matter-of-fact descriptions grounded in your sources.

- Balance perspectives carefully: Wikipedia uses a principle called “due weight,” which asks editors to reflect the prominence of an idea based on available and reliable sources. If 95% of sources support a certain perspective and only 5% argue against it, presenting these views as equally supported would be misleading.

- Be self-critical about bias: Even when we don’t intend to, our prejudices—whether political, cultural, or personal—can creep into our writing. Revisit your edits after a short break with fresh eyes, or use Wikipedia’s Talk Pages to invite feedback from fellow editors who can point out areas where you might unintentionally tip the scale.

Consequences of Violating the Neutrality Rule

Failing to adhere to neutrality can lead to far-reaching repercussions, not just for an individual editor but for the integrity of Wikipedia as a whole. Blatantly promotional language is a red flag for other editors and administrators, often resulting in your edits being swiftly reverted. If the violation is severe or recurrent—such as persistent bias in politically sensitive articles—it could lead to temporary or permanent editing restrictions.

Neutrality violations can also harm Wikipedia’s reputation as a trusted information source. If a company’s article reads too much like marketing copy, readers might question whether it’s a legitimate, unbiased encyclopedia or a platform susceptible to outside manipulation. To understand how Wikipedia’s content directly affects how your brand is perceived, read our overview of how Wikipedia affects brand reputation.

Ultimately, mastering neutrality isn’t just about meeting a checkbox requirement—it’s about contributing to Wikipedia’s credibility and ensuring your work becomes a lasting part of a global knowledge system.

Conflict of Interest (COI) on Wikipedia: What You Need to Know

COI, or Conflict of Interest, is a term you’ll see often if you’re looking to make any sort of contribution to Wikipedia. It might sound like a legal concept or something reserved for corporate boardrooms, but on Wikipedia, COI is all about preserving the integrity of the platform by discouraging editors from making changes that could be biased by personal, financial, or professional loyalties.

Wikipedia operates on trust. The platform relies on its contributors to build, edit, and refine articles in a way that’s factual, neutral, and community-driven. When someone with a stake in the topic—whether it’s a company employee editing the company’s Wikipedia page, a publicist adding accolades to a celebrity’s profile, or even a diehard fan describing their favorite video game—makes an edit, that trust can slip. The concern isn’t that these editors have bad intentions; it’s that their involvement creates the perception of bias, which Wikipedia tries very hard to avoid.

What Is a Conflict of Interest (COI) on Wikipedia?

According to Wikipedia’s guidelines, a COI isn’t just about obvious ties, like working for a company or being related to someone mentioned in an article. It can also apply to situations where you have an indirect but significant interest in how the topic is presented. For instance, running a blog or managing social media for a niche fan community might tempt you to fine-tune an article so it aligns with your group’s views. Edits like that can subtly skew the information, even unintentionally.

This doesn’t mean you’re barred from editing pages related to topics you care about. What it does mean is that you need to tread carefully. Recognizing where your personal involvement might introduce bias is the first step to mastering COI rules.

Recognizing and Declaring COIs in Your Edits

Transparency is the golden rule when it comes to handling a potential COI on Wikipedia. If you feel your connection to the subject might cloud your neutrality—or if others might perceive it that way—it’s important to disclose the relationship upfront, so the community can assess your contributions with full context.

Let’s say you’re tasked with updating your employer’s Wikipedia page. The most ethical approach isn’t to click “Edit” straight away but to use the article’s Talk page to propose changes. By doing so, you’re allowing impartial editors to review and implement the most relevant and accurate information. You might say something like: “Hi, I work for XYZ Company, and I noticed the current article is missing some updated stats from this independent report [link provided].” If you are paid for your contributions, as an employee or contractor would be, disclosure is not optional: the Wikimedia Foundation’s Terms of Use require it. Declaring your role builds credibility and demonstrates to the community that you’re following the rules.

Even if you’re not directly editing but sharing content with someone else who contributes on your behalf, you’re still responsible for disclosing that connection. Wikipedia editors are an attentive bunch, and failing to be upfront about a COI can backfire, leaving your edits—or worse, your entire account—under scrutiny for potential bias.

Strategies to Manage COI Situations

How do you contribute meaningfully and ethically when you have a COI? One strategy is to approach edits not as a direct participant but as someone who suggests improvements. The Talk page is your best friend. If you can clearly outline the changes needed, back them up with reliable third-party sources, and explain why they’d improve the content, other editors are likely to step in and implement them.

Another approach is reframing how you view Wikipedia contributions altogether. Instead of trying to make a subject you’re connected to look good, focus on filling the article’s gaps. Are there facts missing? Could an existing claim use better citations? These kinds of contributions lower the perceived conflict and align your efforts with Wikipedia’s commitment to accurate, verifiable content.

One final note: patience is key. Edits proposed or made with a COI may face extra scrutiny, and that’s okay. Trust the process, collaborate with the editing community, and remember that the end goal is an article that is complete, credible, and reflective of Wikipedia’s principles. For a comprehensive look at how COI rules work in practice, see our guide on mastering Wikipedia’s editing rules: COI explained.

Best Practices for Editing Within Wikipedia’s Rules

Editing Wikipedia might feel like walking a tightrope at times—balancing factual accuracy, neutrality, and adherence to the platform’s intricate policies. Beyond understanding the core principles like verifiability, neutrality, and avoiding original research, there are practical techniques that can help you edit effectively while respecting Wikipedia’s guidelines.

How to Navigate Wikipedia’s Talk Pages

If Wikipedia articles are a space where information is presented, Talk Pages are the virtual workshop where contributors collaborate, debate, and fine-tune that information. These discussion pages, found by clicking the “Talk” tab at the top of any article, serve as a platform for editors to hash out disagreements, propose changes, and explain their reasoning.

When exploring Talk Pages, approach them with a spirit of collaboration. If you have a suggested edit that might be contentious—like revising how a controversial topic is framed or adding data from a newer, lesser-known source—broach the subject on the Talk Page first. State your reasoning clearly, referencing Wikipedia’s policies where possible, such as citing WP:NPOV (Neutral Point of View) or WP:RS (Reliable Sources). Engaging this way not only builds consensus but also highlights your willingness to follow protocol.

Every comment should be respectful and constructive. Sign your contributions with four tildes (~~~~), which the software converts into your username and a timestamp when you save, reinforcing transparency in your communication. Even if your proposal isn’t accepted immediately, treat the feedback as an opportunity to improve your understanding of Wikipedia’s norms.

Understanding Wikipedia’s Consensus Model

Wikipedia doesn’t operate on a simple majority-rules system; instead, it relies on consensus. Substantive changes to articles require general agreement among editors, even if that agreement isn’t unanimous. Consensus isn’t just about vote counts on Talk Pages—it’s about reasoning and adherence to Wikipedia’s principles.

Let’s say you want to update an article about a recent scientific breakthrough. Even if two or three editors oppose the edit, your argument might still prevail if it’s well-supported by reliable sources and aligns with Wikipedia’s guidelines. Conversely, even ten editors agreeing isn’t enough if the proposed content doesn’t meet standards like verifiability or neutrality.

A great way to foster consensus is by proactively engaging with other editors on Talk Pages, explaining your edits thoroughly in Edit Summaries, and being open to compromise. Consensus is an ongoing process of give-and-take that rewards patience and diplomacy. If disputes escalate, our guide on resolving disputes and conflicts on Wikipedia walks through the formal processes available to editors.

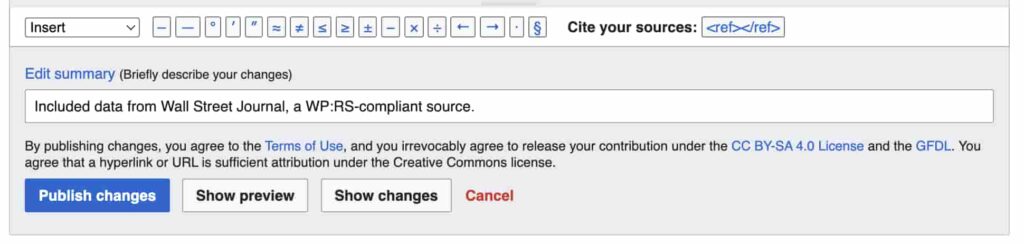

The Role of Edit Summaries and Revision Histories

Every time you hit “Publish changes” on Wikipedia, you’ll notice a small box prompting you for an Edit Summary. Don’t skip this step. A concise, clear summary of your edit not only keeps you accountable but also helps other editors quickly understand what changes have been made and why.

Think of your Edit Summary as a digital courtesy note to the rest of the Wikipedia community. If you’re correcting a typo, a quick “Fixed typo” suffices. For more substantive changes, go beyond the basics. If you’ve rephrased a section to improve neutrality, note it: “Adjusted language for NPOV compliance on [topic].” If you’ve added new information, name the source: “Included data from [Source Name], a WP:RS-compliant source.” This transparency builds trust and minimizes confusion or disputes later.

Coupled with Edit Summaries, Wikipedia’s revision history also ensures accountability. Any article’s “View history” tab allows you—and anyone else—to see a complete log of edits, including yours. Every change, even reversals, is preserved for public scrutiny.

When you piece all these practices together—constructive Talk Page usage, fostering consensus, and leaving thoughtful Edit Summaries—you’re not just playing by Wikipedia’s rules; you’re actively contributing to a culture of responsible, community-driven editing.

Tools and Resources for Mastering Wikipedia’s Editing Rules

Navigating Wikipedia’s web of editing policies can feel like learning a new language—it’s intricate, nuanced, and requires practice to get right. But just like any skill, having the right tools and resources at your disposal makes all the difference. Whether you’re a newcomer trying to make your first contribution or a seasoned editor refining your technique, Wikipedia offers a surprising array of resources to guide you.

Recommended Guidelines and Reference Materials

If you’ve ever felt a little overwhelmed by Wikipedia’s dense policy pages, you’re not alone—many editors admit it can feel like drinking from a fire hose. The good news is, Wikipedia provides streamlined
